Perfecting the Map

Proxy War Part 5: the mark scheme, the 1:1 map, and what we lose when we hand our marking to a machine

In his (extremely) short story, On Exactitude in Science, Jorge Luis Borges describes a guild of cartographers whose desire to categorise and codify the world leads them to create ever larger and more detailed maps of their empire. Driven by their geographical discipline, they eventually create a map which is so detailed it is exactly the same size as the territory it describes. Subsequent generations felt the map was useless so they ‘delivered it up to the Inclemencies of Sun and Winters’ where it was left to rot.

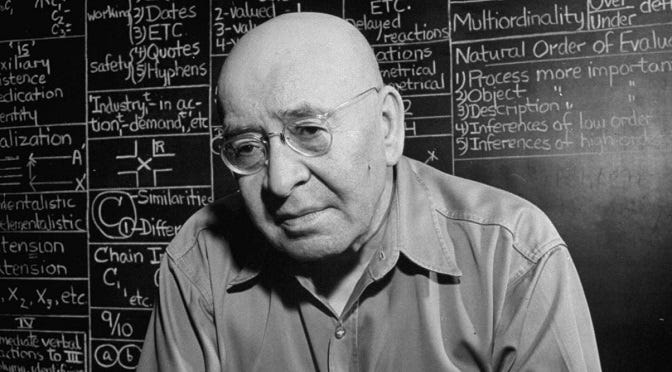

Borges’ story is a thought experiment derived from Alfred Korzybski’s famous line: ‘The map is not the territory’. That’s the bit that’s always remembered and printed on t-shirts and tote bags but it’s useful to note the full quotation from Korzybski’s seminal 1933 work Science and Sanity:

A map is not the territory it represents, but, if correct, it has a similar structure to the territory, which accounts for its usefulness.

He is suggesting that maps, or other proxies, are useful as long as they preserve their structural similarity to the reality they represent. The words ‘if correct’ are important because they imply a warning which Korzybski develops in the book: maps can become dangerous if the structural connection breaks down or if we forget the distinction between map and territory. He argued that words carry enormous suggestive power. Once a label is attached to something, people respond to the label rather than the thing. Essentially, they navigate by the map without checking it against the terrain.

Mark Monmonier points out in his 1991 book How to Lie with Maps that every map must ‘lie’ to a certain extent, either by simplification or, like the London Underground map, by subtle manipulation in order to aid navigation. We are used to a similar idea in education.

The things we should care most about - genuine, deep understanding, the ability to think and to imagine - happen under the surface, inside a student’s head, so we have to use a proxy: a simplified map that lets us navigate their educational journey together.

The mark scheme is education’s answer to the proxy problem within the sphere of assessment. It is, by definition, a simplification of what is really going on in the brain, but we hope it will give us enough fixed points to ensure we are heading in the right direction.

It promises reliability. We believe the same standard will be applied consistently, regardless of who is marking, and it feels like a solution because it converts the invisible (quality of thinking and understanding) into the visible (compliance with criteria). While we expect reliability, we should acknowledge that mark schemes, especially in disciplines where there is implicit subjectivity, leave some wiggle room, some space for professional judgement, and that is where their validity lies.

Of course, the reality is not always as neat as this. I remember my first A-Level examiners’ standardisation meeting where we spent a (very long) day marking exemplar papers to ensure we were all applying the mark scheme in the same way and, by way of final confirmation, had to submit our marks for the last paper without discussion or collusion. The look of helpless desperation on the face of the Chief Examiner as our marks ranged from a C to a top A haunted me throughout the marking period. I guess some maps are better drawn than others and every map requires that the reader holds it the right way up.

Where did mark schemes come from — and what problem were they actually solving?

From my work in schools shaping AI strategy, it is clear that automated marking tools are dominating teachers’ thinking. The decisions being made right now about AI and assessment will shape education for a generation. To make them well, we need to understand that the mark scheme we are rushing to automate has a history, and that history is a warning.

The story begins not with mark schemes at all, but with what assessment was designed to do long before mark schemes existed.

Before the Oxford and Cambridge Local Examinations of 1858, there was essentially no external assessment of secondary-level learning in England at all. Admission to Oxbridge was dependent largely on you being able to afford the exorbitant fees and belonging to the Anglican faith. The assessment was not of learning but of religious and social conformity.

This was in stark contrast to the picture in Scotland where the ancient universities had no entrance examination at all; you paid your lecture fees as you went and attended if you could. This gave rise to the idea of the lad o’pairts, the boy of modest means who was nevertheless able to achieve great academic success. While the idea was doubtless romanticised, and you may well note that there was no lass o’pairts, it does help to explain why so many of the great thinkers of the Enlightenment were Scottish and of humble birth.

The 1858 Local Examinations were created for two reasons: to give provincial grammar schools a means of demonstrating their quality, and to extend the idea of open competitive merit into a society still organised largely by birth. While they were certainly less arbitrary than patronage and less exclusive than religious conformity, the exams were conducted in the register and vocabulary of the educated middle-class and rewarded those students who were able to replicate those features. The proxy was contaminated from its inception by the cultural capital required to perform well in it.

Into the twentieth century and we see the proxy tainted further by Francis Galton’s ideas of eugenics which were operationalised in an educational setting by his devotee, Sir Cyril Burt. Burt shared Galton’s conviction that intelligence was a fixed, biological trait inherited from one’s parents, much like eye colour. He believed that the environment (teaching, home life, nutrition) had almost no impact on a child’s fundamental ‘mental capacity’. His legacy was the 11-plus exam which, on the basis of their performance on four papers taken on one or sometimes two days, sorted children into those capable of an academic grammar school education, those suited to a vocational education at a ‘Secondary Technical’ and the remaining 75-80% who were consigned to a Secondary Modern. As so few Secondary Technicals were built, it essentially became a binary decision.

Given that Burt’s practices were based on scientific ‘evidence’ that was, at best, highly questionable and, more likely, fraudulent, it is shocking that he had such a significant impact on the lives of generations of children.

In 1951, the O-Level replaced the grouped School Certificate with individual subject qualifications. Crucially, it was norm-referenced: grades were allocated by assigning a fixed proportion of each cohort to each grade rather than by setting absolute standards. Ten per cent would receive an A regardless of their absolute performance; a fixed proportion would fail regardless of what they knew.

The through-line here is clear. All of these methods of assessment are essentially about sorting. The machinery was designed to allocate people to schools, to classes, to occupations. It wasn’t designed to describe what students knew or could do.

Moreover, each escalation in the scientific legitimacy of the framework, from religious conformity to competitive merit to Burt’s psychometric intelligence, made the proxy relationship harder to see and therefore harder to challenge. The 11-plus was more difficult to oppose than aristocratic patronage precisely because it came dressed as science. The bell curve of attainment looked like natural science rather than policy.

What changed with the introduction of the GCSE — and why was that change genuinely significant?

The introduction of the GCSE exams in 1988, replacing the two-tiered O-Level and CSE system, represented a genuine philosophical rupture. For the first time, the educational establishment recognised the proxy problem and replaced norm-referenced with criterion-referenced assessment. The explicit purpose of assessment shifted from sorting to describing. It asked not, ‘Where does this student rank?’ but, ‘What has this student achieved against a defined standard?’

Several arguments were put forward in support of this move. First was the equity argument. Under norm-referencing, roughly 40% of O-Level candidates were guaranteed to fail, not because of their absolute performance but because it was a statistical necessity. Criterion-referencing broke that logic: if all students met the standard, all students would pass. Second, the new system was more transparent and offered students both a clear sense of what they had to do in order to get a B and the reassurance that it would be the same from year to year. The third argument was pedagogical rather than political and offered diagnostic potential for teachers. If a grade describes what a student can do, rather than where they sit in a distribution, then it becomes useful information for teachers rather than just a sorting mechanism for institutions.

Unusually, these arguments won widespread support across the political divide.

The GCSE promised to replace sorting with describing - did it deliver?

I think ‘partially and imperfectly’ is probably a fair answer to that question. In practice, GCSE grade boundaries are not fully criterion-referenced. They continue to be managed statistically so that roughly similar proportions achieve each grade. There are valid reasons for this but it does mean the pure aspiration is somewhat compromised in practice.

Much more importantly, the shift created an unanticipated problem. As soon as the criteria became public and permanent, they became targets. Anyone who has read the earlier parts of this series about The Proxy War will be very disappointed if I don’t mention Charles Goodhart, he of the eponymous law, at this point. In case you need a reminder, this is usually summarised as:

When a measure becomes a target, it ceases to be a good measure.

Once the mark scheme became a public description of what quality looked like, it also became a specification of what teachers and students needed to produce. The effect was to narrow what was being rewarded and what was taught and, progressively, to crowd out the examiner’s professional judgement as the criteria became more detailed and prescriptive and the body of exemplar work grew. To be clear, no-one was behaving badly here; teachers and students were responding perfectly rationally to an accountability system that judged them on outcomes. But, when my wife, as a young teacher in a high-achieving girls’ grammar school, was instructed by her Head of Department to teach the top set Of Mice and Men instead of Jane Eyre, because ‘it’s easy and they’ll all get As’, it should have served as a warning about the direction of travel.

Essentially, teachers started to rely on the map and stopped looking at the terrain.

In order to protect their work, cartographers will sometimes create a fictitious place on their map so that, if it appears on a competitor’s map, it will serve as proof of a copyright infringement. A famous example was the addition of a tiny hamlet called Agloe to a 1930s map of New York created by Otto G Lindberg and Ernest Alpers. When they saw Agloe on a map published years later by Rand McNally, they prepared to sue. Rand McNally’s defence was unexpected but watertight: they had visited the place and had found the Agloe General Store, the Agloe Fishing Lodge and several houses on the exact site. People had seen Agloe on the map, visited it, concluded it was a real place precisely because it was on the map and decided to develop the land. Agloe was no longer a fiction; the map had summoned it into existence.

Something similar happened to the mark scheme. When it described a high-quality analytical response as demonstrating ‘sustained critical judgement’, ‘well-chosen evidence’ and ‘sophisticated vocabulary’, it was trying to describe genuine thinking. But, as teachers drilled their students to replicate these features, a new kind of writing came into existence: the exam essay. This might have the syntactic markers of critical engagement, the carefully memorised quotations deployed as evidence and enough long and slightly misused words to qualify as ‘sophisticated’ but it was derived directly from the mark scheme. I have coached hundreds of students to follow the formula and I’ve lost count of the students who have come to me asking me to help them make their writing ‘sound more A-Level’. Just as it did with Agloe, the map shaped the reality.

And yet, even as the exam essay genre colonised the space, one thing remained that the map could not replace: the professional who knew the terrain.

What has AI revealed about the mark scheme as a proxy?

Periodically, I upload an A-Level paper into an LLM and ask it to produce two essays that 18-year-old students might feasibly write in the time limit, one worthy of a high A grade and one that would score a solid C grade. Just as Ethan Mollick uses otters on a plane to gauge the development of AI image generation, this is my way of tracking the ability of AI to generate exam essays. Try it for yourself. I can’t tell the difference between AI and student essays anymore.

AI can produce work, rapidly and at scale, that satisfies mark scheme criteria without any of the cognitive processes that those criteria were designed to evidence.

AI isn’t really creating a new problem here. It is exposing a pre-existing one with vivid clarity. The proxy was already fragile. AI simply makes the fragility undeniable.

The EdTech market has responded by creating AI marking tools, systems that can apply the mark scheme to student work algorithmically, at speed and with absolute consistency. It looks like a solution to a real problem: the cost and inconsistency of human marking. The exam boards are already experimenting with these tools for exam marking and I’m sure their business managers in particular are secretly excited by the impact they could have on the bottom line.

I fear it is the wrong solution to the wrong problem. This doesn’t fix the proxy problem; it automates it.

We now have AI capable of accurately marking work that AI has written, satisfying criteria without understanding and producing grades that certify a learning process that has never taken place. The circularity is complete.

There is a crucial difference between what mark schemes were and what AI assessment risks creating. A mark scheme is a simplified map and will always be an imperfect representation of reality but it remains useful if it preserves structural similarity. It can do that because trained professionals, examiners and teachers, have walked all over the real terrain and they understand what it really looks like; they understand the relationship between the map and the territory.

An AI tool, trained on marked scripts, learns to imitate the decisions of those human markers but it doesn’t understand the judgement they were based on. It learns what high-scoring work looks like, not what high-scoring work is. And, because it is trained on student work that has already been shaped by the mark scheme, it gets ever better at recognising criterion-satisfying writing.

Once AI marking tools are deployed, they begin to generate new data. Scripts marked by AI tools enter the record and, when the next generation of tools is trained, it is trained partly on data that previous AI helped to produce. Each new tool refines its model of quality against examples that its predecessors have already filtered through the same model.

Significantly, this circularity is not a hypothetical future risk. It is already happening as large language models are increasingly trained on text generated by previous large language models. The degradation that can result is known as model collapse: the outputs become progressively more distorted as the model learns from its own reflections rather than from the world.

As the datasets grow larger and larger, so they become increasingly self-referential and distanced from the territory they purport to describe. We are creating bigger and bigger maps and it is clear that we are on a trajectory towards a 1:1 map such as Borges imagined. This is not an intentional policy we have crafted and agreed. It is the logical consequence of optimising for consistency at scale when you remove the human discipline and expertise that connects the map to the real territory.

We can choose to build assessment systems that use AI to make our students’ thinking and understanding more visible, focusing firmly on process over product. Or, we can drift along the road towards a 1:1 map the size of the ‘Empire’.

In Borges’ story, the cartographers perfected their map to the point where it became useless. He begins his story in the past tense but ends it in the present. He leaves us in no doubt what that present looks like. The discipline of geography is gone. What remains are tattered ruins, inhabited by those with no knowledge of what they mean and no one left to ask.

In the Deserts of the West, still today, there are Tattered Ruins of that Map, inhabited by Animals and Beggars; in all the Land there is no other Relic of the Disciplines of Geography.